The healthcare and hospitality industries have always depended on the warmth of caregivers and hospitality workers, much of which is conveyed through nonverbal communication. The human face is the main display of these emotions, conveying concern, smiles, frowns and more.

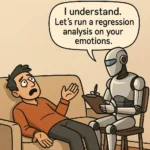

There have been efforts to introduce robots into these industries, but they have consistently fallen short in one respect: the ability of the robots to display emotions. This has become a challenge that many robotics researchers have tried to overcome. “There is a limit to how much we humans can engage emotionally with cloud-based chatbots or disembodied smart-home speakers,” said Hod Lipson, professor of mechanical engineering and director of the Creative Machines Lab. “Our brains seem to respond well to robots that have some kind of recognizable physical presence.”

Researchers in the Creative Machines Lab at Columbia Engineering, who have spent five years trying to bridge the gap between robots and humans, have created EVA, a new autonomous robot with a soft, expressive face that responds to match the expressions of nearby humans.

“The idea for EVA took shape a few years ago, when my students and I began to notice that the robots in our lab were staring back at us through plastic, googly eyes,” said Lipson.

The team built EVA as a bust that bears a strong resemblance to the performers of the Blue Man Group. EVA was created with the ability to express the six basic emotions of anger, disgust, fear, joy, sadness, and surprise, as well as an array of more nuanced emotions, by using artificial “muscles” (i.e. cables and motors) that pull on specific points on EVA’s face, mimicking the movements of the more than 42 tiny muscles attached at various points to the skin and bones of human faces.

“People seemed to be humanizing their robotic colleagues by giving them eyes, an identity, or a name,” Lipson added. “This made us wonder, if eyes and clothing work, why not make a robot that has a super-expressive and responsive human face?”

“The greatest challenge in creating EVA was designing a system that was compact enough to fit inside the confines of a human skull while still being functional enough to produce a wide range of facial expressions,” noted Zanwar Faraj, an undergraduate researcher who led a team of students in building the robot’s physical “machinery”.

The robot is already winning over the researchers themselves by spreading good cheer. “I was minding my own business one day when EVA suddenly gave me a big, friendly smile,” Lipson recalled. “I knew it was purely mechanical, but I found myself reflexively smiling back.”

The researchers added a note of caution, however: “EVA is a laboratory experiment, and mimicry alone is still a far cry from the complex ways in which humans communicate using facial expressions. But such enabling technologies could someday have beneficial, real-world applications. For example, robots capable of responding to a wide variety of human body language would be useful in workplaces, hospitals, schools, and homes.”

Quotes and article adapted from a press release provided by Columbia University.

The research was presented at the ICRA conference on May 30, 2021, and the robot blueprints are open-sourced on HardwareX (April 2021).
